Particle lighting

I put together an implementation of the particle shadowing technique NVIDIA showed off a while ago. My original intention was to do a survey of particle lighting techniques, but in the end I just tried out two different methods that sounded promising.

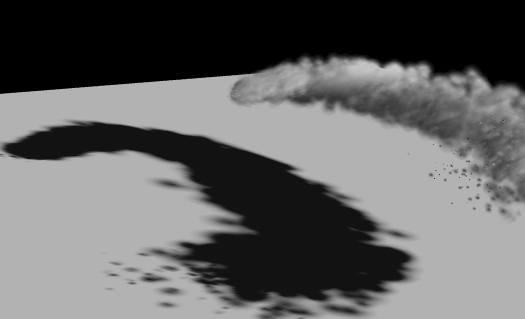

The first was the one ATI used in the Ruby White Out demo. The best takeaway from it is that they write out the min distance, max distance and density in one pass. You can do this by setting your RGB blend equation to GL_MIN and your alpha blend equation to GL_FUNC_ADD, then writing out r=z, g=1-z, b=0, a=density for each particle. The max depth comes from the green channel: it ends up holding min(1-z), so the max depth is 1-g (think of it as the minimum distance from the far end point). Here's a screen:

The technique needs a bit of fudging to look OK: blur the depths and add some smoothing functions. It only works for mostly convex objects, so it's good for amorphous blobs (clouds maybe). Performance-wise it is probably the best candidate for current-gen consoles.
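To make the single-pass min/max/density trick concrete, here is a rough CPU sketch of what the blend unit does to one pixel. It is not the demo's code: the function name `blend_particles` is made up, and it assumes the render target is cleared to r=1, g=1, a=0 and can hold unclamped values (a float target, say).

```python
def blend_particles(depths, densities):
    """Simulate one pixel: MIN blending on RGB, additive blending on alpha.

    depths    -- normalized particle depths z in [0, 1] covering this pixel
    densities -- per-particle density written to alpha
    Returns (min_depth, max_depth, total_density).
    """
    # Assumed clear colour: r=1 and g=1 so the first MIN keeps the particle's value.
    r, g, a = 1.0, 1.0, 0.0
    for z, density in zip(depths, densities):
        r = min(r, z)        # r accumulates the nearest depth
        g = min(g, 1.0 - z)  # g accumulates min(1-z), i.e. 1 - farthest depth
        a += density         # alpha accumulates density additively
    # Reconstruct the max depth from the green channel.
    return r, 1.0 - g, a


print(blend_particles([0.5, 0.25, 0.75], [0.125, 0.25, 0.125]))
```

The order of the particles doesn't matter here, since both min and addition are commutative, which is why no sorting is needed for this pass.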

http://ati.amd.com/developer/gdc/2007/ArtAndTechnologyOfWhiteout(Siggraph07).pdf

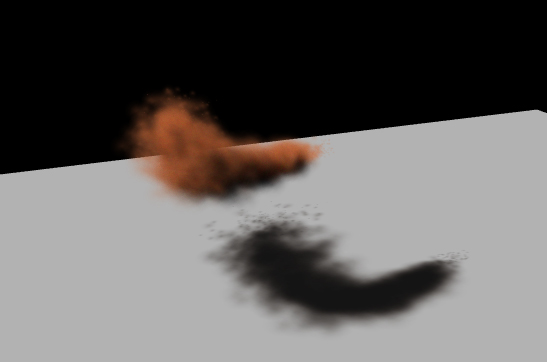

IMO the NVIDIA technique is much nicer visually. It gives you fairly accurate self-shadowing, which looks great, but it is considerably more expensive. I won't go into the implementation details too much, as the paper does a pretty good job of describing it.

http://developer.download.nvidia.com/compute/cuda/sdk/website/projects/smokeParticles/doc/smokeParticles.pdf

The NVIDIA demo uses 32k particles and 32 slices, but you can get pretty decent results with far fewer. Here's a pic of my implementation running on my trusty 7600 with 1000 particles and 10 slices through the volume:

Unfortunately you need a large number of fairly transparent particles, otherwise there are noticeable artifacts as particles change order and end up in different slices. You can improve this by using a non-linear distribution of slices so that you use more slices up front (which works nicely because the extinction of light in participating media is exponential).
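One way to get such a non-linear distribution is to place the slice boundaries so that each slice sees an equal drop in transmittance, rather than an equal distance. This is only a sketch of that idea, not what my implementation or the NVIDIA demo does: the function `slice_boundaries` and its `optical_depth` parameter (extinction coefficient times the volume's thickness along the light direction) are assumptions for illustration.

```python
import math


def slice_boundaries(num_slices, optical_depth):
    """Return num_slices + 1 normalized boundaries in [0, 1] along the light direction.

    Assumes uniform extinction, so transmittance is T(t) = exp(-optical_depth * t).
    Boundaries are chosen so T drops by the same amount across every slice,
    which makes the slices nearest the light the thinnest.
    """
    t_end = math.exp(-optical_depth)  # transmittance at the far side of the volume
    bounds = []
    for i in range(num_slices + 1):
        transmittance = 1.0 - (i / num_slices) * (1.0 - t_end)
        # Invert T(t) to find the depth at which this transmittance is reached.
        bounds.append(-math.log(transmittance) / optical_depth)
    return bounds


for b in slice_boundaries(10, 3.0):
    print(round(b, 3))
```

A higher `optical_depth` pushes more of the slices towards the light; as it approaches zero the spacing tends back towards linear.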

Looking forward to tackling some surface shaders next.